Why Most Companies Fail at Data Quality and What Rules You Should Actually Apply at Each Layer

Let me be honest with you

I have been working in data since 2013. From classic SQL Server BI stacks (SSIS pipelines, SSAS cubes, DAX) to a full shift in 2020 into modern data engineering on Google Cloud: BigQuery, dbt, Airflow, Great Expectations, Looker. Over a decade across consultancy projects, in multiple industries, multiple countries, multiple tech stacks.

And after working with roughly 20 clients across that career, I can count on one hand how many of them had a serious, systematic data quality framework in place.

Two. Maybe two and a half, if I'm generous.

That number still bothers me. Why companies are not investing properly on data quality?

The problem nobody wants to say out loud

Here is what I saw repeatedly: a business team that did not trust their own data.

I would sit in a meeting, someone would pull up a dashboard, and the first thing a stakeholder would say was "but is this number right?". Not "what does this tell us?". Not "what should we do?". Just "is this right?".

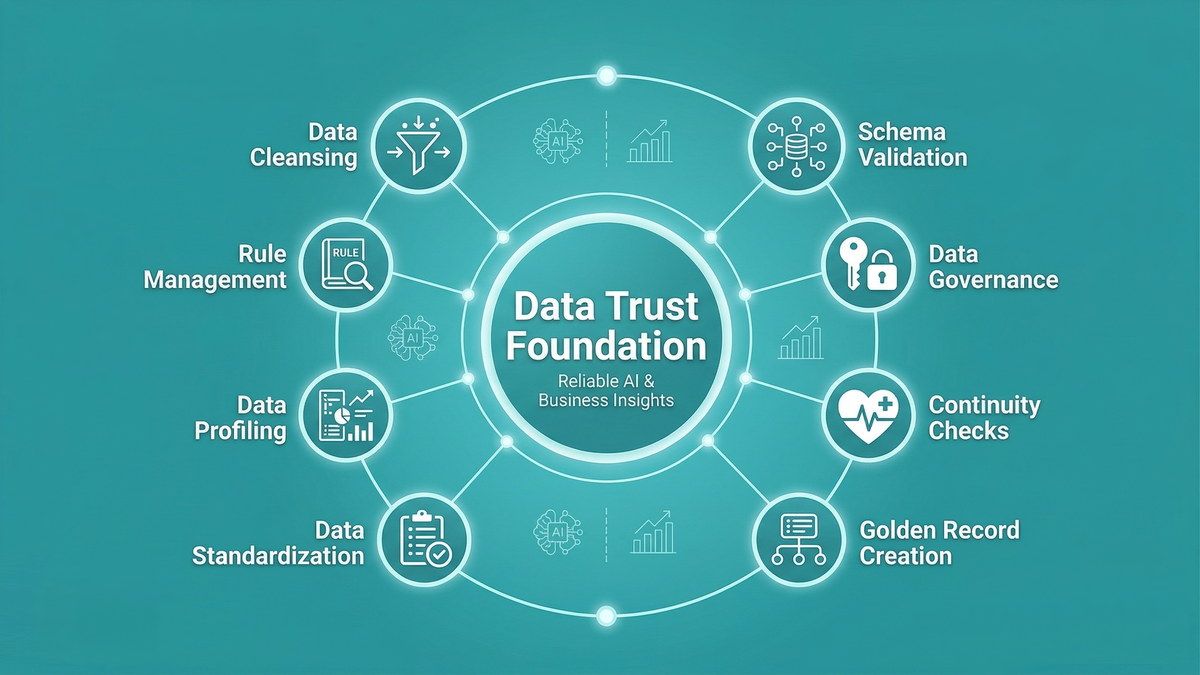

That question is a symptom of a broken foundation. When your business users are spending cognitive energy doubting the data instead of acting on it, you have a trust problem. And trust problems are data quality problems in disguise.

What makes this worse is that most of these companies were simultaneously pitching AI initiatives to their leadership. "We want to build a recommendation engine." "We want to predict churn." "We want a Copilot that answers questions about our sales data."

But if a human analyst looks at a number and asks is this right?, what do you think an AI agent is going to do with that same data? It will confidently produce a wrong answer. And nobody will know.

You cannot build AI on top of a data estate you don't trust. Quality is not a nice-to-have. It is the foundation.

So why does this keep happening?

This is the question I have been asking myself for years. It is not a tooling problem, the ecosystem is extraordinary with dbt, Soda, Great Expectations, Monte Carlo, Bigeye, Microsoft Fabric's own built-in observability features. The tools exist, they are mature and many of them are free or low cost to start with.

It is not a knowledge problem either. The Medallion architecture (or similar) has been mainstream long enough that most data engineers can describe it in a job interview.

From what I have seen, it usually comes down to three things:

1. Data quality is treated as a phase, not a practice. Teams plan to "add data quality later, once the pipeline is running (like documentation but that's another problem)." Later never comes. The pipeline ships, the stakeholders start using it, and refactoring it to add quality checks feels risky and expensive.

2. Nobody owns it. Data engineers think it is an analytics problem. Analytics engineers think it is a data engineering problem. Data owners in the business think it is an IT problem. The result is nobody does it seriously.

3. There is no clear framework for what to check and where. Even teams that want to implement data quality get stuck because they do not know what rules belong at Bronze versus Silver versus Gold. They either over-engineer ingestion with complex business rules, or they leave Gold completely unchecked and wonder why reports are wrong.

That third problem is the one I want to solve in this post.

The Medallion architecture - a quick reset

If you are new to this pattern: the Medallion architecture organises data into three progressive layers of quality and refinement.

- Bronze - raw data, as close to the source as possible. Ingested but not cleaned.

- Silver - conformed, deduplicated, and standardised data. Business rules applied. Ready for analytical use.

- Gold - aggregated, business-ready data products. Optimised for consumption by reports, dashboards, ML models, and AI agents.

Each layer has a different purpose, and therefore a different set of quality checks that belong there. This is the part most teams get wrong. They apply the same kind of validation everywhere, or they try to enforce business rules at ingestion and wonder why the pipeline breaks when the source system changes.

The rule I follow is simple: validate what the layer promises, nothing more and nothing less.

Bronze - protect the raw data

Bronze is your historical record. Its job is to land data from the source system intact. That's it. You are not cleaning here, you are not transforming, you are not enforcing business rules.

But "raw" does not mean "anything goes." There are checks that absolutely belong at this layer, and they are all about structural integrity and completeness.

What to check at Bronze

Schema validation: This is the one I see ignored most often, and it causes the most downstream pain. When a source system adds a column, renames a field, or changes a data type, your pipeline should know and alert. Not silently break three layers down.

At Bronze, validate that the schema matches what you expect: column names, data types, and column count. If the source sends you something different, flag it immediately. Do not let schema drift flow silently into Silver.

In dbt, you can enforce this with dbt-expectations or contract testing. On Microsoft Fabric, you can use alerts or define your Lakehouse table schema explicitly and validate on load.

Row count and volume checks: A sudden drop in row count is often the first sign that something has gone wrong upstream like a broken connector, an API rate limit, a source system outage. At Bronze, track the volume of each load and alert if it falls below a threshold relative to historical averages.

This is not about business logic. It is about knowing that data arrived.

Null checks on primary keys and technical identifiers: Not business keys but technical ones. The source record ID, the event timestamp, the partition key. If these are null, the record is practically unprocessable and should be flagged or quarantined at ingestion.

Duplicate detection (soft): At Bronze, you are not deduplicating, that belongs in Silver. But you should detect and log duplicates from the source. This tells you whether the source system has a data problem, which is valuable information to pass back to the data producer.

Freshness: Is the data arriving on schedule? A Bronze table that has not been updated in 48 hours when it should refresh every hour is a quality issue even before you look at a single row.

What does NOT belong at Bronze

- Business rule validation (e.g., "status must be one of ACTIVE, INACTIVE, PENDING")

- Cross-table consistency checks

- Aggregation-level assertions

- Any transformation logic

If you enforce business rules at Bronze, you will reject records every time the source system introduces a new valid value. Bronze should be resilient. Let the data in, flag structural issues, and let Silver do the business validation.

Silver - enforce the contract

Silver is where the real quality work happens. This is where you transform raw data into something an analyst can actually trust. The checks here are about conformance, consistency, and business rule compliance.

By the time a record reaches Silver, you have already confirmed it arrived structurally intact from Bronze. Now you need to confirm it is semantically correct.

What to check at Silver

Referential integrity: Does every order_id in your orders table have a corresponding record in the customers table? Does every product_id exist in your product catalogue? Broken foreign key relationships are one of the most common sources of silent data quality issues. Rows that technically load but produce wrong JOIN results in Gold.

Domain validation (accepted values): This is where business rules live. If your source system sends a payment_status of "REFUNDED_PARTIAL" and your Silver model only accepts "PAID", "REFUNDED", or "PENDING", that is a Silver-level failure. The record should be flagged and routed to a quarantine table, not silently dropped.

Accepted value lists should be maintained as seed tables or configuration files, not hardcoded in SQL. They change, and you want to change them in one place.

Date and range logic: end_date should not be before start_date. age should not be negative. order_quantity should not be zero for a completed order. These are cross-column consistency checks that cannot be done at Bronze because they require understanding the business semantics of the data.

Deduplication: At Silver, you deduplicate. Define your deduplication strategy explicitly: which key uniquely identifies a business entity, and which version of a duplicate record do you keep (latest by timestamp? highest confidence score?). This strategy should also be documented, not just implemented in a CTE that someone added two years ago.

Standardisation checks: After standardising phone numbers, email addresses, country codes, and currency fields, validate the output. It is not enough to apply a transformation, you need to assert that the transformation produced valid results. A phone number normalisation function that silently produces nulls for 15% of records is not quality, it's an hidden problem.

Completeness thresholds: Not every null is an error, but unexpectedly high null rates often are. Define acceptable null thresholds for important columns, and alert when they are breached. If customer_email is null for 40% of records this week when it was 5% last week, something changed upstream.

The quarantine pattern

One of the most important architectural decisions for Silver is what you do with records that fail quality checks. My strong recommendation: do not drop them, and do not let them through unchecked.

Route failing records to a quarantine table - silver_orders_quarantine - alongside a reason code and timestamp. This gives you:

- An audit trail of exactly what failed and when

- The ability to reprocess records once the upstream issue is fixed

- Visibility for the data producer to understand what their system is sending

The quarantine table is also excellent for building trust with business stakeholders. Instead of saying "some records were dropped," you can show them exactly which records failed, why, and what the plan is to recover them.

Gold - assert the business promise

Gold is your data product layer. Reports, dashboards, ML features, and AI agents consume from here. The checks at Gold are not about individual records anymore, they are about aggregate correctness and business expectations.

Gold quality checks are the closest thing to a contract with your business users.

What to check at Gold

Business metric assertions: If you know that total monthly revenue should not vary by more than 20% from the previous month without an exceptional event, test for that. If your daily active users count should never be higher than your total registered users count, assert it. These are things a human analyst would notice in a review, so makes sense to automate them.

Aggregation consistency: The sum of line-item totals should equal the order total. The sum of daily sales should equal the monthly summary. The count of orders in your fact table should match the count in your operational report. These cross-layer consistency checks matter a lot and are almost never implemented.

SLA freshness: Gold tables have consumers. Those consumers have expectations. If a dashboard is supposed to show yesterday's data and your Gold table is three days stale, that is a quality failure even if every row is technically correct. Freshness at Gold is a service level commitment.

Referential completeness for dashboards: Every dimension referenced in your fact table should have a matching row in the dimension table. Missing dimension members mean your dashboard will show NULLs or simply drop rows from aggregations. This is one of the most common causes of the "why don't the numbers add up?" conversation.

What does NOT belong at Gold

- Re-running Bronze or Silver checks. If something failed at Silver and somehow made it to Gold, that is a pipeline governance problem, not a Gold-layer check.

- Row-level transformations. Gold should be read-optimised, not transformation-heavy.

- Complex cleansing logic. If you are doing heavy data manipulation in Gold, you have likely misallocated logic from Silver.

Putting it all together

Here is the framework I use when setting up a new data platform, summarised as a quick reference:

| Check type | Bronze | Silver | Gold |

|---|---|---|---|

| Schema validation | ✅ Critical | ✅ Post-transform | - |

| Row count / volume | ✅ Alert on drop | ✅ Pre/post dedup | - |

| Primary key nulls | ✅ Source PKs | ✅ Business keys | - |

| Uniqueness | ⚠️ Detect only | ✅ Enforce | ✅ Aggregation keys |

| Accepted values | - | ✅ Full enforcement | - |

| Referential integrity | - | ✅ Critical | ✅ Fact-to-dim |

| Cross-column logic | - | ✅ Business rules | - |

| Freshness | ✅ Arrival SLA | ✅ Transform SLA | ✅ Consumer SLA |

| Completeness threshold | ✅ Technical cols | ✅ Business cols | - |

| Aggregation consistency | - | - | ✅ Critical |

| Business metric variance | - | - | ✅ Critical |

| Quarantine routing | ✅ Optional | ✅ Recommended | ✅ Alert only |

The mindset shift

The teams I have seen do this well share one thing in common: they treat data quality as an ongoing practice, not a one-time project. They have checks running in every pipeline run. They have a process for handling failures. They review quality metrics as part of their regular team rituals. And critically, they share quality dashboards with the business, not just with engineering.

That last point matters more than most people realise. When a business stakeholder can see a data quality dashboard that shows "99.2% of records passed all Silver checks this week, 23 records were quarantined due to missing customer ID (and a link to view them)" then trust is built. The conversation shifts from "is this number right?" to "what should we do with this?".

That is the goal. Not perfect data.. there is no such thing. But transparent, well-understood, trustworthy data.

Your AI initiatives, your dashboards, your executive reports they all sit on top of this foundation. Get the foundation right first.

If any of this resonates or if you disagree with where I've drawn the lines, I'd love to hear from you in the comments. This is a living framework that has evolved over a decade of real projects, and it keeps evolving.

I'm a Microsoft Fabric Practice Lead and data engineering consultant with 13 years of experience across BI, cloud data platforms, and analytics engineering. Currently building on Microsoft Fabric.

Follow on LinkedIn · Subscribe to cloudingdata.ai