FabCon 2026: What Actually Matters for Data Engineers and Tech Leads

Atlanta delivered. Now comes the hard part.

FabCon 2026 in Atlanta was the biggest one yet - over 8,000 attendees, co-located with SQLCon for the first time, and a keynote that made it clear Microsoft is no longer positioning Fabric as just an analytics platform. The message from the main stage was blunt: Fabric is the control plane for your entire data estate.

I came into this as someone who spent years building on Google Cloud - BigQuery, dbt, Airflow, the full modern data stack - and recently made the move into Microsoft Fabric as a Practice Lead. So I am watching these announcements through a slightly different lens than someone who has been in the Microsoft ecosystem their whole career. I am genuinely excited about several things. I also think some of the headlines deserve a closer look before teams start redesigning their architectures.

This post breaks down what I believe actually matters from FabCon 2026, split into what it means for data engineers building pipelines day to day, and what it means for tech leads and architects making platform decisions.

Let me start with the announcements themselves, then we can talk about what to do with them.

The headline announcements

Fabric Data Agents - now Generally Available

Data Agents are AI-powered assistants grounded in your own data - lakehouses, semantic models, and data warehouses in OneLake. They went GA at FabCon, which means they are no longer an experimental feature. You can build and deploy them in production.

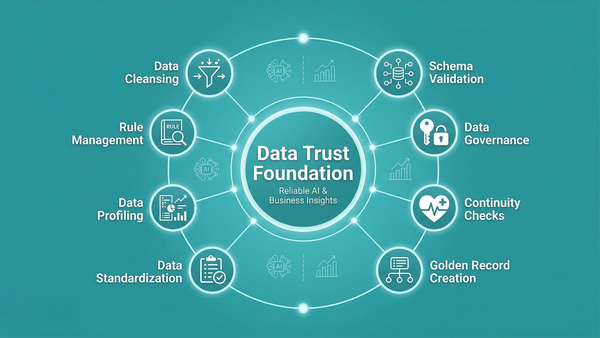

The key word here is grounded. These are not general-purpose chatbots. They reason over your data using the semantic context you have defined - which means they are only as good as your data model and your data quality. More on that implication later.

OneLake Security - now Generally Available

This one might be the most practically impactful announcement for data engineering teams, even though it did not get the flashiest demo.

Until now, Fabric had a fragmented security model. You would define access in Spark, then separately in the SQL Analytics Endpoint, then separately in Power BI. Three places, three sets of rules, and hope they stayed in sync.

OneLake Security unifies all of that. You define roles once - with row-level and column-level controls - and they are enforced automatically across every Fabric engine: Spark notebooks, SQL Analytics Endpoint, Power BI Direct Lake, and now Eventhouse (KQL) as well. The permission follows the data, not the tool.

I will cover this subject in upcoming blog posts.

Fabric IQ - in Preview

Fabric IQ is the most strategically significant announcement, and also the one I want to be most careful about overhyping.

The pitch is a semantic intelligence layer that sits above OneLake - combining Power BI's semantic model technology with Fabric's graph capability to give AI agents a business-contextual understanding of your data. Think of it as the layer that teaches your agents what "revenue" actually means in your organisation, not just what the column is named.

It is currently in preview, and the keynote was light on implementation details. The vision is compelling, but we need to watch how it matures.

Direct Lake on OneLake - now Generally Available

Direct Lake has always been the performance story for Power BI on Fabric - query data directly from OneLake at import-class speed, no scheduled refresh, no data duplication. What changed with GA is that it now supports cross-item modeling (multiple Fabric artifacts in one semantic model) and fully enforces OneLake Security policies. This closes two of the biggest gaps that held teams back from adopting it in production.

Database Hub - Early Access

A unified management layer for databases across Azure SQL, Cosmos DB, PostgreSQL, SQL Server via Azure Arc, MySQL, and Fabric databases - all accessible from within the Fabric portal. This is about consolidating operational and analytical workloads under one roof, not just analytics.

Fabric Local MCP - Generally Available

An open-source local server that allows AI coding assistants - GitHub Copilot, Claude, Cursor - to connect securely and directly to Fabric. This is genuinely useful for developer productivity right now.

What this means for data engineers

Let me be specific here, because "Fabric is evolving" is not actionable advice.

OneLake Security GA changes how you should design access from day one

If you are starting a new Fabric project today, there is no longer a reason to manage permissions separately per workload. Design your security model once in OneLake Security and let it propagate. This means:

- Define your data access roles at the OneLake level before you build your semantic models or SQL endpoints

- Row-level and column-level security should be part of your data model design, not an afterthought added before go-live

- If you have existing Fabric workloads, start planning migration to OneLake Security - the fragmented model is effectively deprecated now

This is a real quality-of-life improvement for data engineers who have been managing security in multiple places. It also reduces the risk of access mismatches between engines.
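To make the "define once, enforce everywhere" idea concrete, here is a minimal Python sketch. It is a conceptual model only, not the OneLake Security API: `Role`, `apply_role`, `spark_read`, and `sql_endpoint_read` are hypothetical names I made up for illustration, and in Fabric the roles live in OneLake Security itself rather than in application code. The point is simply that every access path delegates to the same policy, so results cannot drift between engines.

```python
from dataclasses import dataclass
from typing import Callable

Row = dict[str, object]


@dataclass(frozen=True)
class Role:
    """Hypothetical role: a column allow-list plus a row-level predicate."""
    name: str
    allowed_columns: frozenset[str]
    row_filter: Callable[[Row], bool]


def apply_role(rows: list[Row], role: Role) -> list[Row]:
    """Return only the rows and columns this role is allowed to see."""
    return [
        {col: val for col, val in row.items() if col in role.allowed_columns}
        for row in rows
        if role.row_filter(row)
    ]


# Illustrative data, not taken from any real system.
SALES = [
    {"region": "EMEA", "customer": "A", "revenue": 100, "margin": 0.30},
    {"region": "APAC", "customer": "B", "revenue": 250, "margin": 0.22},
    {"region": "EMEA", "customer": "C", "revenue": 75, "margin": 0.41},
]

EMEA_ANALYST = Role(
    name="emea_analyst",
    allowed_columns=frozenset({"region", "customer", "revenue"}),  # margin is hidden
    row_filter=lambda row: row["region"] == "EMEA",  # row-level restriction
)


# Stand-ins for two engines: both delegate to the same policy function,
# so there is no second copy of the rules to keep in sync.
def spark_read(role: Role) -> list[Row]:
    return apply_role(SALES, role)


def sql_endpoint_read(role: Role) -> list[Row]:
    return apply_role(SALES, role)


if __name__ == "__main__":
    print(spark_read(EMEA_ANALYST))
    print(sql_endpoint_read(EMEA_ANALYST))
```

In the old fragmented model, each of those read functions would carry its own copy of the filter, and that duplication is exactly where mismatches creep in.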

Direct Lake on OneLake GA means the semantic model conversation changes

For analytics engineers working on semantic models, the combination of Direct Lake GA and OneLake Security GA removes the two main excuses not to adopt Direct Lake. You now have:

- Import-class query performance without a refresh cycle

- Security enforcement that works consistently whether a user accesses data through Power BI, SQL, or a Fabric data agent

- Cross-artifact modeling, so you can build a semantic model that spans multiple lakehouses or warehouses

If you are still building Import mode models on Fabric because Direct Lake "had limitations" - those limitations are largely gone. Worth revisiting.

Fabric Data Agents GA raises the bar on data quality

This is the one I feel most strongly about as someone who just wrote an entire post on why data quality is under-invested.

Data Agents are grounded in your OneLake data. That means if your Silver layer has inconsistent business keys, your Gold layer has metric calculation errors, or your semantic model has ambiguous measure definitions - the agent will reason over that noise and produce unreliable answers. Confidently.

GA means teams will start deploying these in production. The pressure to demonstrate AI capabilities to business stakeholders is real. But if the data foundation is not solid, agents will erode trust faster than they build it.

If you are being asked to build a Data Agent for your organisation, my recommendation is to treat it as a forcing function to audit your data quality first. The questions an agent will surface - "why is revenue different in these two reports?" - are the same questions your analysts have been quietly avoiding for years.
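As a starting point for that audit, here is a small sketch in plain Python of the two checks mentioned above: business keys that differ only by case or whitespace, and a metric that disagrees between two reports. The function names, the normalisation rule (trim and uppercase), and the sample values are all assumptions for illustration; a real audit would run against your Silver and Gold tables with rules agreed with the business.

```python
from collections import defaultdict


def find_inconsistent_keys(keys):
    """Group raw business keys that collapse to the same normalised value.

    Assumed normalisation: strip whitespace and uppercase. Only groups with
    more than one raw spelling are returned.
    """
    groups = defaultdict(set)
    for key in keys:
        groups[key.strip().upper()].add(key)
    return {norm: sorted(raw) for norm, raw in groups.items() if len(raw) > 1}


def reconcile_metric(report_a, report_b, tolerance=0.01):
    """Return periods where two reports disagree on a metric.

    A period counts as a mismatch if it is missing from either report or if
    the values differ by more than `tolerance`.
    """
    mismatches = {}
    for period in sorted(set(report_a) | set(report_b)):
        a, b = report_a.get(period), report_b.get(period)
        if a is None or b is None or abs(a - b) > tolerance:
            mismatches[period] = (a, b)
    return mismatches


if __name__ == "__main__":
    # Illustrative values only.
    customer_keys = ["CUST-001", "cust-001 ", "CUST-002"]
    print(find_inconsistent_keys(customer_keys))

    finance_revenue = {"2026-01": 1000.0, "2026-02": 1200.0}
    sales_revenue = {"2026-01": 1000.0, "2026-02": 1150.0, "2026-03": 900.0}
    print(reconcile_metric(finance_revenue, sales_revenue))
```

Anything these checks flag is a question an agent would otherwise answer with whichever version of the number it happens to find first.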

Fabric CLI and MCP mean CI/CD and developer tooling are finally maturing

The Fabric CLI and Fabric Local MCP are smaller announcements but they matter for day-to-day engineering work. Being able to manage workspaces, lakehouses, and pipelines from the command line - and having AI coding assistants connect directly to Fabric - closes a gap that has made Fabric feel less developer-friendly than competing platforms.

If your team is not yet using Git integration and deployment pipelines in Fabric, now is a good time to start building that discipline. The tooling is mature enough.

What this means for tech leads and architects

The "analytics platform" framing is officially over

Microsoft's positioning at FabCon was unambiguous: Fabric is the control plane for the entire enterprise data estate - not just the analytics layer sitting on top of operational systems.

The Database Hub, the expansion of OneLake mirroring to cover Oracle, SAP, SharePoint, and more, the convergence with SQL Server 2025 - all of it points in the same direction. Microsoft wants Fabric to be where you manage databases, run analytics, govern data, and deploy AI agents. One platform, one governance model, one storage layer.

For tech leads evaluating Fabric, this has a real implication: the ROI case is no longer just "we can deprecate our data warehouse." It is "we can consolidate a significant portion of our data tooling under one platform and one license conversation."

Whether that is attractive depends heavily on your existing stack and your organisation's relationship with Microsoft licensing. But the architectural ambition is real.

Is Fabric enterprise-ready? My honest answer.

Yes - with qualifications.

The core platform - OneLake, Lakehouses, Data Warehouses, Data Factory, Power BI integration - is production-ready and battle-tested. There are Fortune 500 companies running serious workloads on it. The GA of OneLake Security removes one of the biggest remaining blockers for regulated industries.

Where I would still be cautious:

Fabric IQ is in preview. The semantic intelligence layer that makes Data Agents actually reliable at scale is not GA. If your AI agent strategy depends on Fabric IQ, you are building on something that can still change significantly.

The platform is moving very fast. That is mostly a good thing - but it means your team needs to invest in staying current. What was a limitation six months ago may be resolved. What is GA today may have a better approach announced next quarter. Teams that treat Fabric as a static platform to learn once will struggle.

The integration story with non-Microsoft tools is improving but uneven. OneLake interoperability with Snowflake, the Iceberg support, the MCP integrations - these are genuine steps toward openness. But if your stack has deep commitments to Databricks, dbt Core, or GCP services, the integration friction is real and worth evaluating honestly.

The convergence of operational and analytical data is the real story

The most strategically significant thing happening in Fabric - and across the data platform market generally - is the breakdown of the boundary between operational and analytical systems.

OneLake mirroring means your Cosmos DB, Azure SQL, and Postgres data can all be queried in Fabric without ETL. The Database Hub brings operational database management into the same interface as your analytics workloads. Fabric Data Agents can operate across both.

This is not just a convenience feature. It changes the architecture of what is possible. Agents that can act on operational data in near real time, using analytical context from OneLake, with security enforced uniformly - that is a meaningfully different capability than what most organisations have today.

The question for tech leads is not "should we use Fabric?" but "how do we evolve our architecture to take advantage of this convergence without creating new dependencies we will regret?"

What I am watching closely

A few things I am tracking as someone actively building on Fabric:

Fabric IQ implementation details. The ontology-driven semantic layer is compelling in theory. I want to see how teams actually define and maintain these ontologies in practice, and how they handle the inevitable drift between the business's understanding of "revenue" and what is in the data model.

Data quality tooling on Fabric. The Fabric-native story is improving but still behind. I am watching whether the dbt-Fabric integration matures, and whether Soda or similar tools build deeper Fabric support.

OneLake as a true multi-cloud data foundation. The mirroring expansions and Iceberg support are genuine progress. But for organisations with data in AWS S3 or GCP, the shortcut story is still more limited than I would like.

My take

FabCon 2026 was not a round of incremental improvements. The GA of OneLake Security, Data Agents, and Direct Lake on OneLake - combined with the strategic repositioning of Fabric as a full enterprise data control plane - represents a genuine step change in the platform's maturity.

But I keep coming back to the same thing I said in my last post about data quality: the tools are not the limiting factor. Most organisations that struggle with AI on Fabric will not struggle because Fabric lacks a feature. They will struggle because their data model is unclear, their quality checks are inconsistent, and their semantic layer does not accurately reflect how the business thinks about its data.

The announcements at FabCon make a stronger foundation possible. Whether teams build on that foundation properly is a different question entirely.

If you are a data engineer or tech lead evaluating what to do next: start with OneLake Security and Direct Lake GA - those are production-ready improvements you can action now. Be excited about Data Agents and Fabric IQ, but be patient with them. And if someone in your organisation is asking to deploy an AI agent on Fabric data, make sure the data it will reason over is actually trustworthy first.

The platform is ready for more than most teams are currently asking of it. That is the real story from Atlanta.

I'm a Microsoft Fabric Practice Lead and data engineering consultant with 13 years of experience across BI, cloud data platforms, and analytics engineering. Currently building on Microsoft Fabric.

Follow on LinkedIn · Subscribe to cloudingdata.ai